Insurance fraud through manipulated MRI and X-ray images: how great is the risk?

For a long time, medical image data was regarded as a reliable "source of truth". X-rays, MRIs and CT scans provided seemingly unambiguous evidence for diagnoses and formed the basis for benefit decisions in the insurance industry. However, this status is increasingly being shaken as manipulated X-ray images pose a new challenge for insurers.

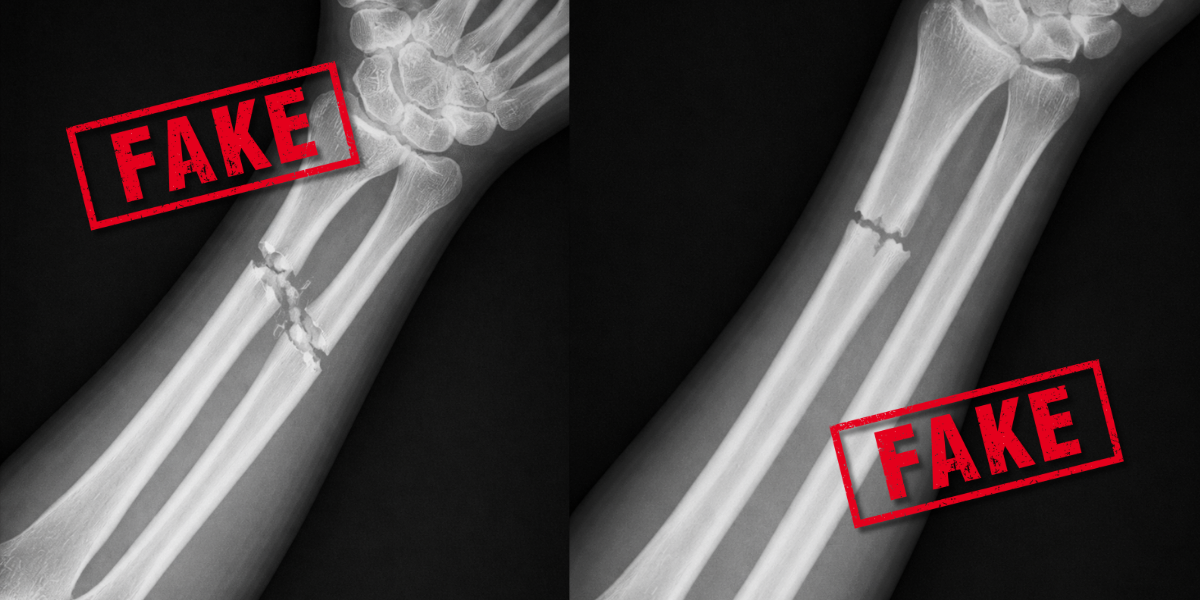

AI-generated X-ray images can be so convincing that even experienced radiologists are often unable to distinguish them from real images. Recent reports illustrate the extent of this new problem, such as "Deceptively real: AI fakes X-ray images - even radiologists fall for it" in the Frankfurter Rundschau and other media.

For health and life insurers, this means risks in the millions, as falsified findings trigger benefits that are not medically justified. Traditional manual checks by claim handlers or medical experts are increasingly insufficient.

This is exactly where fraudify comes in: with the help of specialized image forensics, even the smallest irregularities in medical image data are reliably detected. We show how insurers, medical professionals and IT security managers can protect themselves against manipulated X-ray images.

What are manipulated X-ray images?

Manipulated X-ray images are medical images that are deliberately altered or completely recreated with the help of artificial intelligence or image processing. The aim is usually to fake or conceal illnesses in order to gain financial advantages. Thanks to the rapid progress of AI, it is now possible to create or alter images that are almost indistinguishable from the original images. What began as a "deepfake" in social networks has long since reached critical areas. Insurance companies are therefore facing a new dimension of billing fraud.

Can experienced radiologists recognize deepfake MRI & X-ray images?

The relevance of the problem is highlighted by a study published in the journal Radiology (Tordjman, Yuce et al.). It shows how realistic AI-generated X-ray images have become, which is why the German medical journal Ärzteblatt also issued a warning:

Deceptively real images: Modern AI models produce anatomically plausible images that can hardly be distinguished from real ones.

Uncertainty among specialists: In a study of 17 radiologists from six countries, only 41% of AI images were spontaneously recognized as fakes when the participants had not been told in advance that fakes were included.

Experience does not protect: Professional experience had no significant influence on the recognition rate.

Low barriers to entry: Deceptively real images can now be generated by simply entering text - without specialist knowledge.

How good are traditional AI systems?

Particularly striking: even the AI systems that generate such images do not reliably recognize their own fakes. In the study, the model used correctly identified only around 85% of its own generated content. Neither classic AI models nor experienced doctors can meet this challenge on their own. Reliable detection therefore calls for specialized image forensics systems such as fraudify.

Are there typical indications of falsified X-ray images?

Typical characteristics of deepfake X-ray images are excessively smooth bones and unnaturally straight spines. However, the quality of the artificial images is constantly increasing, regardless of the body region, which makes recognition more difficult. Undetected deepfake X-ray images jeopardize diagnostic reliability, undermine trust in digital patient records and can be used for insurance fraud.

Excursus: Can manipulated radiological images pose a threat to the cybersecurity of medical facilities?

In addition to classic billing fraud, there is another, often underestimated danger: targeted cyberattacks on hospitals using manipulated radiological image data. Attackers could, for example, infiltrate clinical systems and alter X-ray, MRI or CT images in such a way that misdiagnoses are encouraged or treatments are deliberately misdirected.

Doctors are also legally obliged to keep truthful treatment records; manipulations violate this obligation and can lead to an easing of the burden of proof in liability proceedings.

Although a 2018 article in Ärzte Zeitung considered such attacks unlikely, the quality that AI-generated images have since reached suggests that this danger is real today. Experts warn of increasingly professional methods that specifically exploit human vulnerabilities in organizations.

Identifying manipulated X-ray images is therefore a growing challenge for cybersecurity in healthcare and medicine.

Billing fraud - how big is the problem?

Billing fraud is causing increasingly high losses in statutory health and long-term care insurance, as reported by the National Association of Statutory Health Insurance Funds (GKV Spitzenverband). After a decline at the beginning of the pandemic, the number of cases and loss amounts have recently risen significantly: In 2022 and 2023, losses amounted to over 200 million euros (previously 132 million euros in 2020/2021). At the same time, the number of reports of possible fraud cases increased by around 21%. On a positive note, however, almost half of the outstanding receivables - around 92 million euros - were secured.
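To put the reported figures in proportion, the relative change between the two reporting periods can be worked out directly. The short calculation below is only an illustration: it treats "over 200 million euros" as exactly 200 million, so the result is a lower bound, and the function name `relative_change` is chosen here for the example.

```python
def relative_change(previous: float, current: float) -> float:
    """Return the relative change from previous to current (0.5 means +50%)."""
    return (current - previous) / previous


# Figures from the text, in million euros.
loss_2020_2021 = 132.0
loss_2022_2023 = 200.0  # assumption: "over 200 million" taken as a lower bound

growth = relative_change(loss_2020_2021, loss_2022_2023)
print(f"Losses grew by at least {growth:.1%}")  # prints: Losses grew by at least 51.5%
```

Under this assumption, losses grew by more than half, while the number of reports rose by around 21% over the same period.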

Experts nevertheless assume that the number of unreported cases is high. Billing fraud deprives the healthcare system of considerable funds that are actually needed for patient care.

The use of artificial intelligence (AI) is seen as a key lever in curbing this development. Our aim is to detect fraud structures at an early stage, reduce losses and better protect the community of contributors.

Why are health and life insurers now exposed to a particular risk?

The manipulation of medical image data is not a theoretical problem, but a direct threat to the economic stability of insurers.

In health insurance:

Billing for treatments that are not necessary

Chain reactions due to subsequent billing based on a single falsified image

In life and disability insurance:

Unauthorized pension payments over decades

High one-off payments due to faked serious illnesses

If such manipulations are not recognized, this leads to considerable financial misallocations and jeopardizes competitiveness in the long term.

What role do manipulated or falsified documents play?

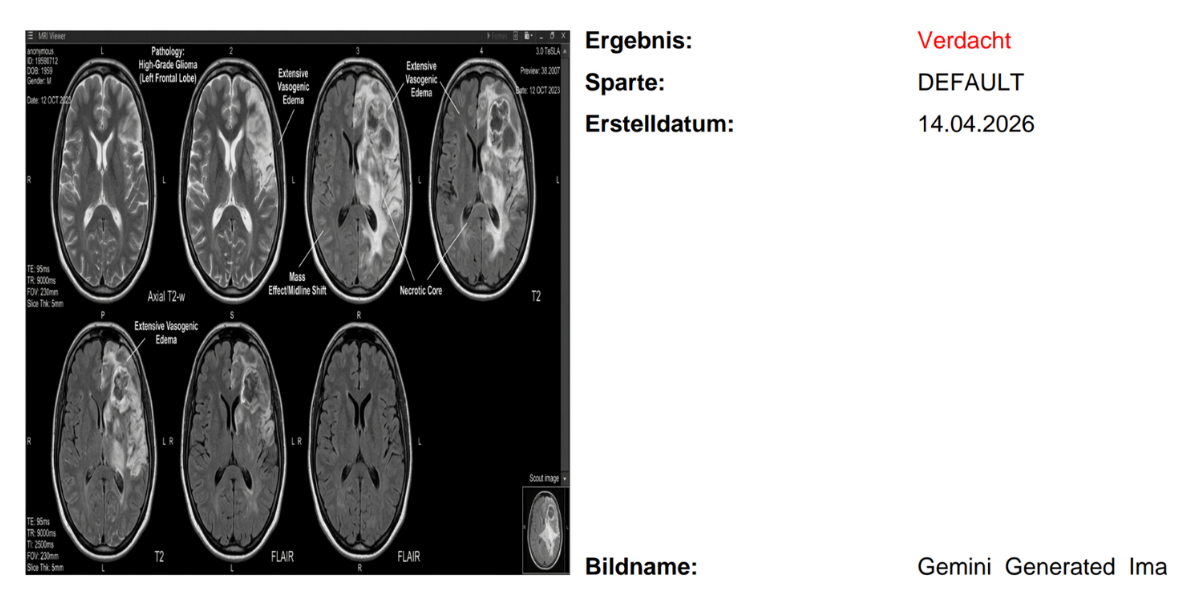

Manipulation rarely ends with the image alone. It is often an orchestrated interplay of several forged elements. Modern language models make it possible to create deceptively genuine-looking accompanying documents:

Medically plausible findings: Diagnoses match the manipulated images exactly and imitate the style of experienced medical specialists.

Formal perfection: Letterheads, stamps and signatures look authentic and pass visual tests.

Scalability: Fraud can be automated and carried out on a large scale.

How can insurers protect themselves against AI-generated images and falsified documents?

One thing is clear: the integrity of medical image data can no longer be taken for granted. With the increasing spread of powerful AI, the risk of systematic billing fraud is also growing.

For insurers, this means that the use of specialized image forensics software is no longer an optional upgrade, but a necessary prerequisite for effective fraud management.

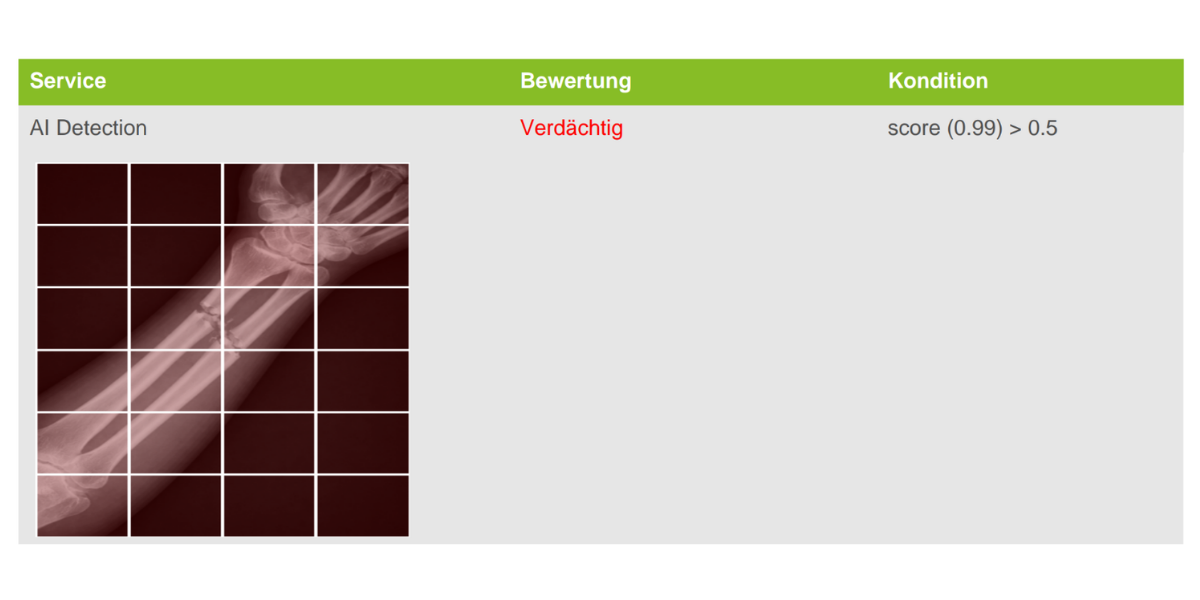

fraudify offers a multi-layered approach for this:

Image forensic analysis: Examination down to pixel level to make even the finest manipulations visible

Deepfake detection: Identification of typical AI artefacts and structural inconsistencies

Metadata inspection: Analysis of timestamps, device information and processing traces

Reverse image search on the internet: Detection of images that have been used multiple times or taken out of context

Duplicate detection: Identification of similar or modified images across different cases

Document forensics: Optional integration of document forensics by a partner company
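To make the idea of duplicate detection more concrete, here is a minimal sketch of one well-known technique, the average hash, a simple perceptual hash. It is not fraudify's actual method, which the text does not describe. The function names, the 8x8 grid and the distance threshold are all assumptions made for this example, and images are represented as plain lists of grayscale values so that the sketch runs without extra libraries.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel values (0-255)


def average_hash(image: Image, size: int = 8) -> int:
    """Reduce the image to size x size block means and set one bit per
    block that is brighter than the mean of all blocks."""
    height, width = len(image), len(image[0])
    if height < size or width < size:
        raise ValueError("image is smaller than the hash grid")
    blocks = []
    for by in range(size):
        y0, y1 = by * height // size, (by + 1) * height // size
        for bx in range(size):
            x0, x1 = bx * width // size, (bx + 1) * width // size
            values = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            blocks.append(sum(values) / len(values))
    mean = sum(blocks) / len(blocks)
    bits = 0
    for value in blocks:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bits in which two hashes differ."""
    return bin(a ^ b).count("1")


def is_near_duplicate(a: Image, b: Image, max_distance: int = 5) -> bool:
    """Hypothetical threshold: hashes differing in few bits count as duplicates."""
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance


# Synthetic stand-ins for real scans: a horizontal gradient, a slightly
# brightened copy of it, and an unrelated vertical gradient.
original = [[x * 8 for x in range(32)] for _ in range(32)]
brightened = [[min(255, v + 10) for v in row] for row in original]
unrelated = [[y * 8 for _ in range(32)] for y in range(32)]

print(is_near_duplicate(original, brightened))  # True
print(is_near_duplicate(original, unrelated))   # False
```

Because the hash depends only on coarse brightness structure, a copy that has been slightly brightened or re-compressed tends to keep the same hash, while a different image does not. A production system would need more robust features and a threshold tuned on real data.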

Talk to us: Find out how fraudify optimizes processes, reduces risks and detects attempted fraud at an early stage. We would be happy to demonstrate how integration into existing audit trails can look in practice.

FAQ - Frequently asked questions about manipulated X-ray images

Manipulated X-ray images are medical images that are deliberately altered or completely recreated with the help of artificial intelligence or image processing. The aim is usually to fake or conceal illnesses in order to gain financial advantages.

Today, modern AI systems can generate deceptively realistic medical images. These are often so realistic that even medical specialists have difficulty distinguishing them from real images. This significantly increases the risk of systematic fraud.

Radiologists cannot always recognize manipulated images. Studies show that even experienced radiologists are often unable to reliably identify AI-generated images. Experience alone is therefore no longer enough to detect manipulations reliably.

AI facilitates both the creation of fake images and the generation of matching documents such as medical reports or invoices. This results in increasingly complex and difficult-to-detect fraud patterns.

Some falsified images show typical signs, such as unnaturally smooth structures or anatomical inconsistencies. However, ever better AI models are reducing these features, so they are often no longer visible.

In statutory health and long-term care insurance alone, losses run into the hundreds of millions. Experts also assume a high number of unreported cases.

Manipulated images can also become a cybersecurity risk: in addition to fraud scenarios, targeted cyberattacks are conceivable in which medical image data is manipulated in order to influence diagnoses or treatments.

The most effective protection lies in the use of specialized technologies. Image forensics solutions such as fraudify automatically analyze medical images and detect even the smallest manipulations that remain invisible to humans.

The quality of AI-generated content is now so high that visual checks alone are no longer reliable. Automated, data-based analyses are necessary to detect fraud at an early stage.

fraudify combines various inspection methods such as pixel analysis, deepfake detection, metadata checks and reverse Internet searches. This allows manipulated images and related fraud patterns to be identified and stopped at an early stage.